How to A/B test an email campaign

You will learn

Learn how to set up and run an A/B test for a campaign, how to read the test results, and a few use cases for A/B testing campaigns. Klaviyo's A/B testing feature for campaigns allows you to easily test different subject lines, message content, and send times so you can better understand what works best for your audience.

Create an A/B test for campaign emails

- Navigate to Campaigns > Create campaign.

- In the sidebar, name your campaign.

- Choose Email then click Continue.

- Select the lists or segments you want to send to.

- Click Next.

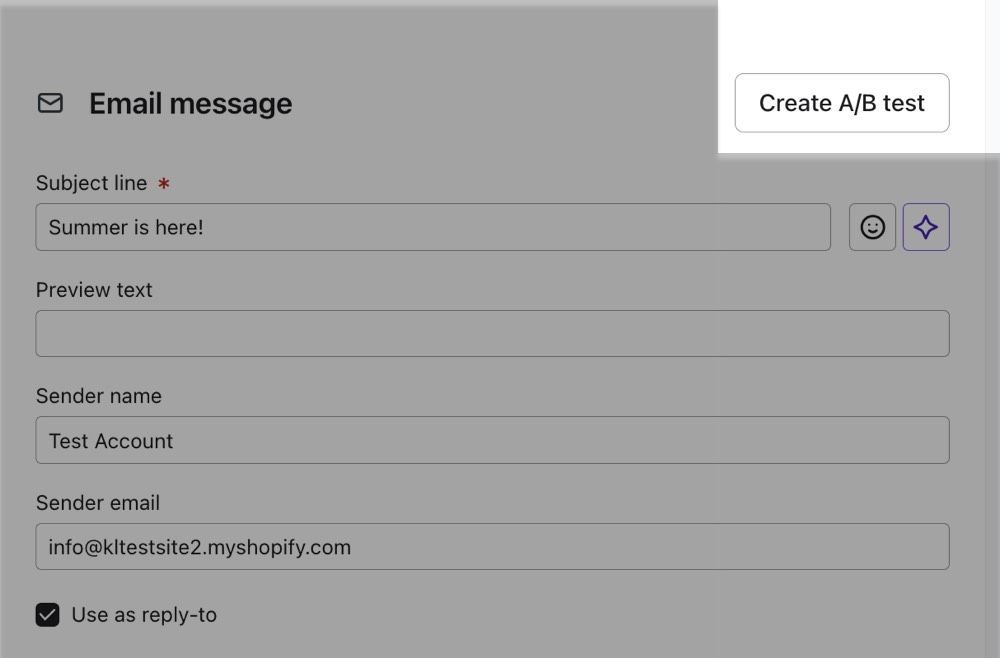

- Input the subject line and, if desired, edit the preview text, sender name, and sender email address.

- Create the first version of your email, adding your copy, images, and links.

- Above the subject line field, click Create A/B test.

- This automatically creates a second, identical variation of your campaign and brings you to the Campaign A/B test page.

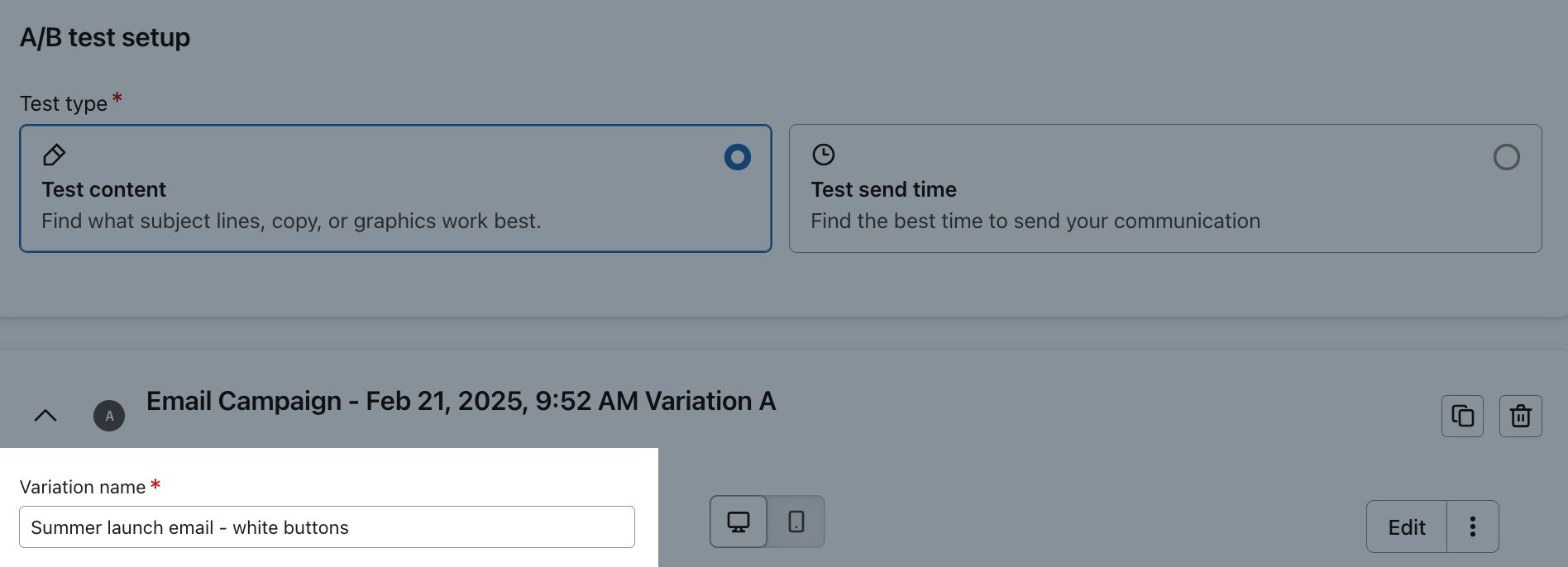

- In each Variation name field, add a descriptive variation name that references what you're A/B testing, e.g., “Summer launch email - white buttons.”

Configure your test variations

Now that you've created a test, decide which element of your campaign you want to test so you can build the variations accordingly.

Test subject lines with AI

This feature is only available for paid Klaviyo accounts. If your account doesn't qualify, skip ahead to the next option: Test content.

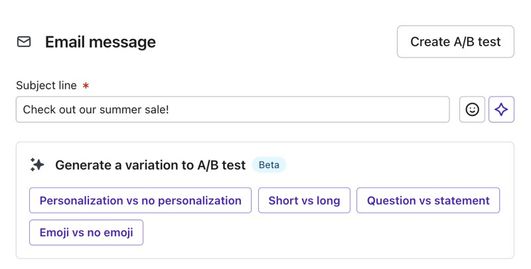

Instead of creating a test manually, you can also automate creation using AI. This feature is for testing subject lines in particular.

- Create an email campaign using the steps from the previous section.

- Choose a template and edit the content of the email.

- Underneath the Subject line field, choose one of the options in the Generate a variation to A/B test section.

After you choose an option, the AI creates a test with 2 variations: variation A uses your original subject line, and variation B uses a similar subject line that the AI generates based on the option you selected.

The options available will depend on the subject line you entered, but examples include:

- Personalization vs. no personalization

Variation B will include the customer's first name in the subject line or remove their name if it was originally included.

- Short vs. long

Variation B will either have a shorter or longer subject line depending on the length of your original subject.

- Question vs. statement

Variation B will convert your original subject line into a question or into a statement if it was originally a question.

- Emoji vs. no emoji

Variation B will include a relevant emoji in the subject line or remove any emojis if they were originally included.

Read the rest of this article for steps on how to further edit and send your A/B test once it's been created.

Test content

You can test message content to determine what your audience wants to see. Click Test content at the top of the page.

Edit one of the variations for your A/B test. It’s important for A/B tests to compare one factor at a time, so if you edit the subject line, do not change anything else. If desired, you can add more variations by clicking the Clone button on the card for a specific variation, but we recommend only 2 variations.
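Before sending, it can help to confirm that the variations really differ in only one factor. The sketch below is one way to check this outside of Klaviyo; the `Variation` class and its fields are hypothetical stand-ins for your own notes about each variation, not Klaviyo objects or API calls.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Variation:
    """Hypothetical record of one campaign variation (not a Klaviyo object)."""
    name: str
    subject_line: str
    preview_text: str
    sender_name: str
    body: str


def differing_fields(a: Variation, b: Variation) -> list[str]:
    """Return the tested fields whose values differ, ignoring the variation name."""
    return [
        f.name
        for f in fields(Variation)
        if f.name != "name" and getattr(a, f.name) != getattr(b, f.name)
    ]


variation_a = Variation(
    name="Summer launch - statement",
    subject_line="Our summer drop is here",
    preview_text="New styles inside",
    sender_name="Acme",
    body="<p>Shop the drop</p>",
)
variation_b = Variation(
    name="Summer launch - question",
    subject_line="Ready for our summer drop?",
    preview_text="New styles inside",
    sender_name="Acme",
    body="<p>Shop the drop</p>",
)

changed = differing_fields(variation_a, variation_b)
print(changed)  # ['subject_line']
```

If the list contains more than one field, the test compares several factors at once and the result will be harder to interpret.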

Test send times

You can also test send times to determine when your audience wants to hear from you. Click Test send times at the top of the page.

When testing send times, keep the content and subject lines of both variations exactly the same.

If you don't know where to start, we recommend trying an evening time. Alternatively, if you qualify, you can use smart send time instead.

Select a test strategy

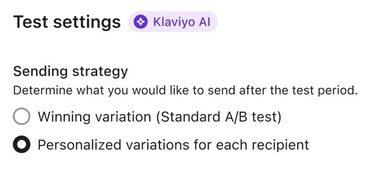

The option to choose a test strategy is only available for accounts with more than 400,000 total profiles. If your account doesn’t qualify, Klaviyo will use the winning variation test strategy.

If your account qualifies, decide on your testing strategy from the following options:

- Winning variation (Standard A/B test)

- Personalized variations for each recipient

The difference between winning variation and personalized variations

Both test strategy options send variations to a test group made up of a percentage of the campaign’s total recipients and measure message success using a metric of your choosing, e.g., open rate. However, there are some key differences in how each strategy sends messages to the rest of the recipients after the test period is over:

- Winning variation - the default option for A/B tests, which will determine 1 winning variation and send it to the rest of the campaign's recipients after the test period is over.

- Personalized variations - uses AI to search for patterns amongst the test recipients who interact with each variation. After the testing period is over, Klaviyo will predict which variation will perform better for each recipient and send the rest of the recipients their preferred variation.
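As a rough illustration of the winning variation strategy, the sketch below picks the variation with the highest rate for the chosen metric once the test period ends. The function names and sample counts are assumptions made for this example; Klaviyo performs this selection for you, and its exact logic is not described in this article.

```python
def rate(successes: int, delivered: int) -> float:
    """Share of delivered messages that produced the winning-metric event."""
    return successes / delivered if delivered else 0.0


def pick_winner(results: dict[str, tuple[int, int]]) -> str:
    """Return the variation with the highest rate.

    `results` maps a variation name to (successes, delivered).
    On a tie, the variation listed first is returned.
    """
    return max(results, key=lambda name: rate(*results[name]))


# Hypothetical test-group results: (opens, delivered) per variation.
test_results = {"A": (220, 1000), "B": (260, 1000)}
winner = pick_winner(test_results)
print(winner)  # B
```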

Personalize variations for each recipient

If you’d like to personalize which variation each recipient receives on an individual basis, select Personalized variations for each recipient.

Klaviyo will use information about each profile to determine which variation is most likely to succeed in converting that profile. This profile information includes, but is not limited to:

- Historical engagement rates

- Customer lifetime value (CLV)

- Location

For example, if you have chosen open rate as your winning metric and profiles in the test group with a CLV of 100 or more open variation A more frequently, but profiles with a CLV of less than 100 open variation B more frequently, the rest of the campaign's recipients will receive emails based on which variation they are most likely to open according to their CLV. This is a simplified example, as Klaviyo will use many data points to determine personalized variations.
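The simplified CLV example above can be sketched as follows. This is only an illustration of the idea under stated assumptions: the single CLV threshold, the function names, and the sample events are all hypothetical, and Klaviyo's actual model uses many more data points.

```python
from collections import defaultdict


def clv_segment(clv: float, threshold: float = 100.0) -> str:
    """Bucket a profile by CLV using the threshold from the example."""
    return "high" if clv >= threshold else "low"


def learn_preferences(test_events):
    """Find the variation with the best open rate within each CLV segment.

    `test_events` is an iterable of (clv, variation, opened) tuples
    collected from the test group.
    """
    counts = defaultdict(lambda: [0, 0])  # (segment, variation) -> [opens, sends]
    for clv, variation, opened in test_events:
        entry = counts[(clv_segment(clv), variation)]
        entry[0] += int(opened)
        entry[1] += 1

    best = {}  # segment -> (variation, open_rate)
    for (segment, variation), (opens, sends) in counts.items():
        open_rate = opens / sends
        if segment not in best or open_rate > best[segment][1]:
            best[segment] = (variation, open_rate)
    return {segment: variation for segment, (variation, _) in best.items()}


# Hypothetical test-group data: (CLV, variation received, opened?)
events = [
    (150, "A", True), (200, "A", True), (120, "B", False), (180, "B", True),
    (40, "A", False), (60, "A", True), (30, "B", True), (80, "B", True),
]
preferences = learn_preferences(events)

# Recipients outside the test group get the variation their segment preferred.
print(preferences[clv_segment(250)])  # A
print(preferences[clv_segment(20)])   # B
```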

Configure A/B test settings

After creating your content, decide the size of your testing pool and the length of your testing period.

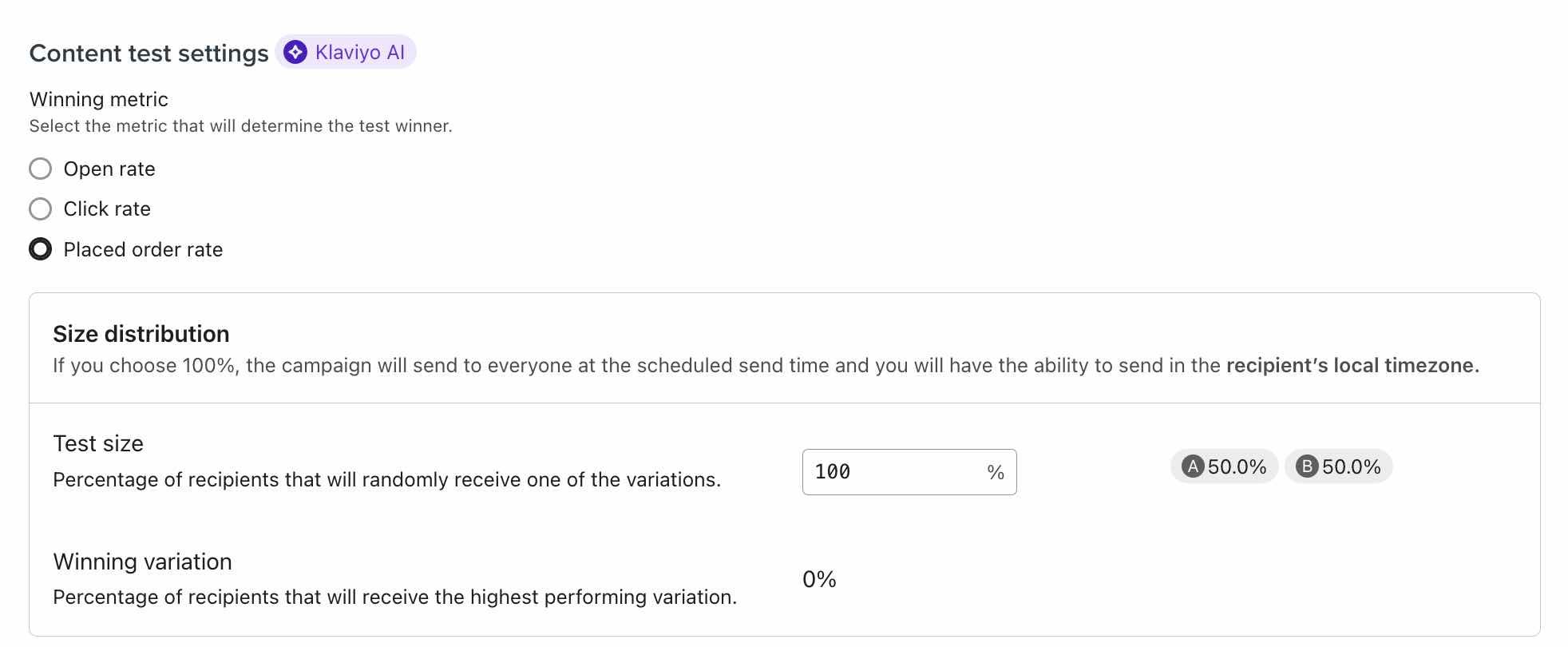

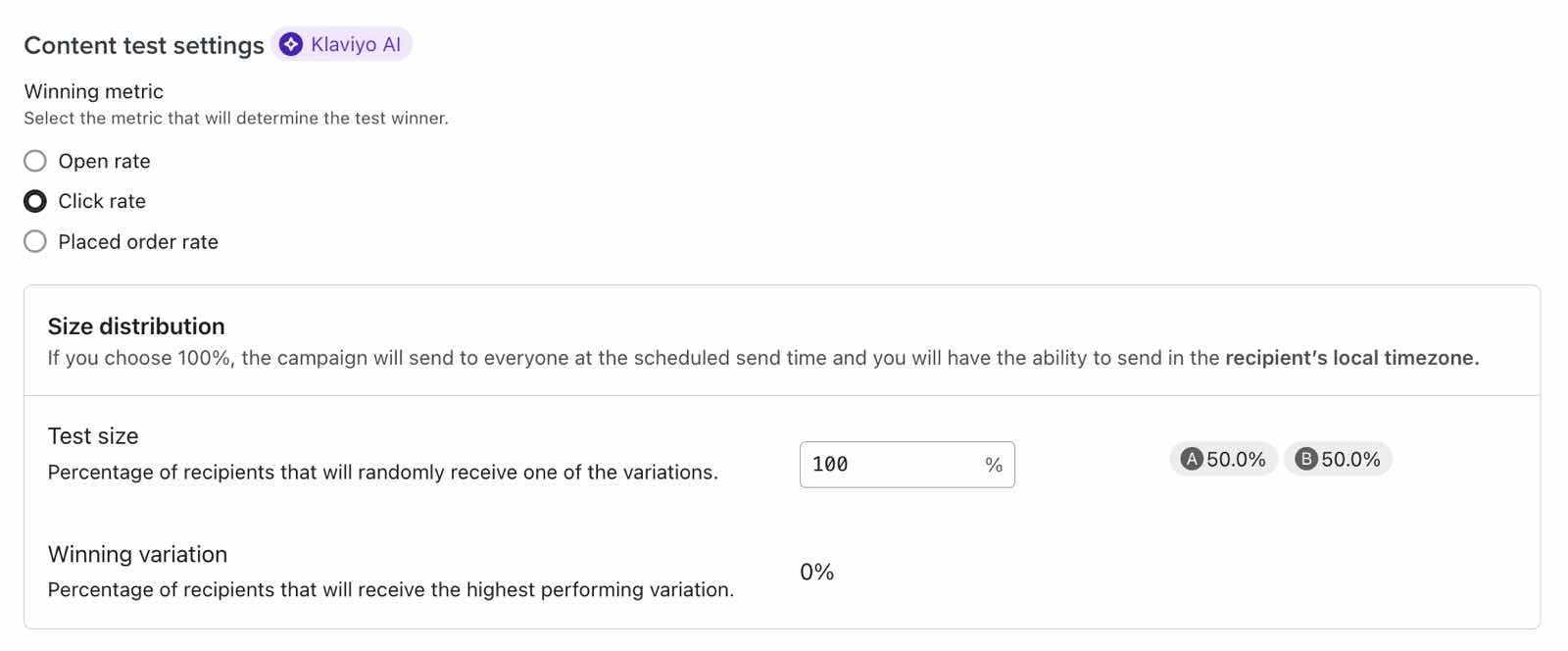

First, choose the winning metric, which is the main metric you want to see improve. Choose from:

- Open rate

Recommended when testing subject line, preview text, or sender name and email address.

- Click rate

Recommended when testing email content, like button size or color.

- Placed order rate

Only available for accounts with a Placed Order metric and not available for personalized variations. This is recommended when testing email content, like whether displaying best sellers or new items leads to more conversions.

For Apple devices using iOS 15, macOS Monterey, iPadOS 15, and watchOS 8, or newer releases, Apple Mail Privacy Protection (MPP) changed the way that we receive open rate data on your emails by prefetching our tracking pixel. With this change, it’s important to understand that open rates may be inflated.

With regard to A/B testing, Klaviyo should account for these inflated open rates; however, you may need a higher threshold to reach statistical significance. If you regularly conduct A/B testing and more than ~45% of your opens come from Apple Mail, we suggest creating a custom report that includes an MPP property. You can also identify these opens in your individual subscriber segments.

For complete information on MPP opens, visit our iOS 15: Everything you need to know about Apple’s changes guide.
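To get a feel for what statistical significance means when comparing two variations, here is a minimal sketch of a standard two-proportion z-test. This is a generic textbook method, not necessarily the calculation Klaviyo uses, and it does not correct for MPP-inflated opens; the function name and sample counts are assumptions for illustration.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the assumption that both variations perform the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both rates are 0% or 100%, so there is no measurable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: 220 of 1,000 opened variation A, 260 of 1,000 opened B.
z, p_value = two_proportion_z_test(220, 1000, 260, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A smaller p-value means the observed difference is less likely to be due to chance. Noisy or inflated open data makes a real difference harder to detect, which is one reason a larger test group can help.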

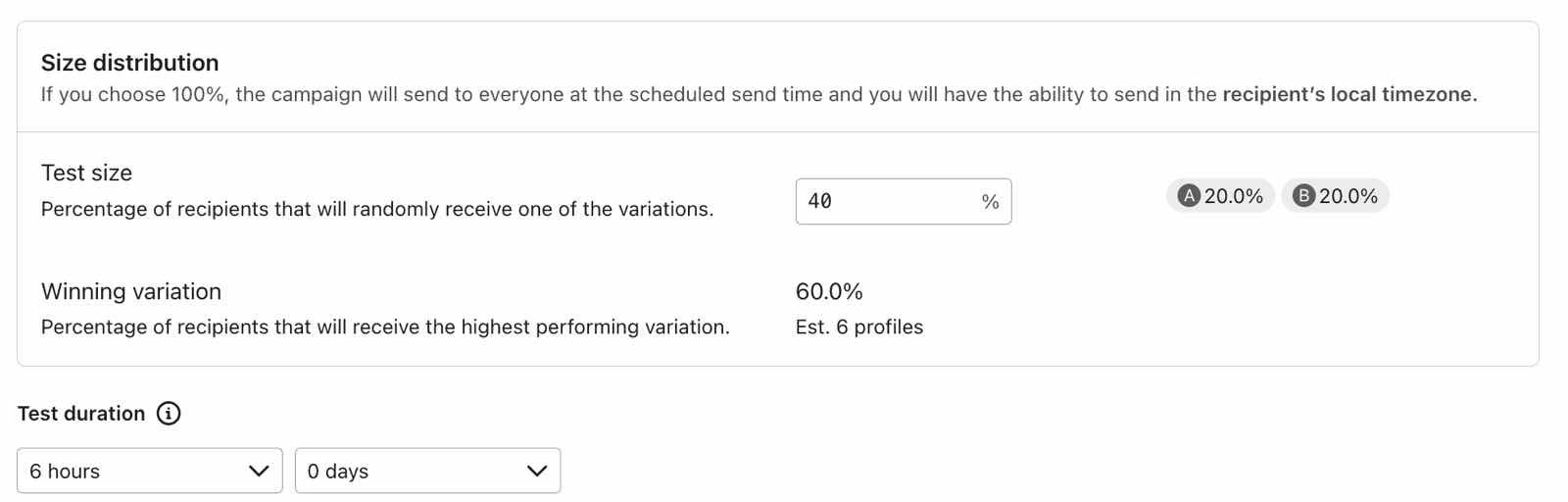

We recommend a test size based on your list size and a test duration based on the A/B test metric (i.e., opens, clicks, or placed orders). Use the slider bar to change the test size if desired. If the test size is less than 100%, you also have the option to adjust your test duration.

In the example below, 20% of the campaign’s recipients will get variation A, and another 20% will get variation B. After 6 hours, a winner is chosen based on how many of these recipients open, click, or place an order (whichever matches the winning metric you selected), and the rest of the recipients receive the winning variation.

After you adjust the test’s settings, click Continue to review.

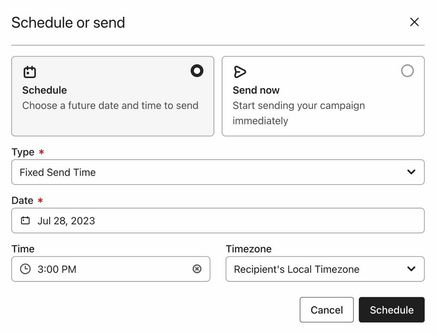
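The arithmetic behind the example split (20% for variation A, 20% for variation B, and the winner sent to everyone else) can be sketched like this. The function name and the 10,000-recipient list are assumptions for illustration; Klaviyo calculates the actual group sizes for you.

```python
def split_recipients(total: int, percent_per_variation: int, variations: int = 2) -> dict[str, int]:
    """Return how many recipients each variation gets and how many remain.

    `percent_per_variation` is a whole-number percentage, e.g. 20 for 20%.
    """
    per_variation = total * percent_per_variation // 100
    remainder = total - per_variation * variations
    if remainder < 0:
        raise ValueError("The variations would need more recipients than the campaign has.")
    return {"per_variation": per_variation, "remainder": remainder}


# Hypothetical campaign with 10,000 recipients and 20% per variation.
split = split_recipients(10_000, 20)
print(split)  # {'per_variation': 2000, 'remainder': 6000}
```

With a test size of 100% (50% per variation in a 2-variation test), the remainder is 0, so no one is left to receive a winning variation after the test.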

If you are A/B testing content and would like to send your message based on each recipient’s timezone:

- Set your test size to 100%.

- Then, when scheduling your campaign, you’ll have the option to choose Recipient’s Local Timezone as the timezone for your send.

What to A/B test

You should only test one variable at a time. Testing multiple variables simultaneously can make it difficult (or impossible) to identify which variables contribute to improved performance. Not sure what to test? See the sections below for starting points.

Tests to improve your placed order rates

To see what drives your recipients to place orders, try testing any of the following in your messages:

- Social proof (e.g., including positive reviews or customer social media posts in your messages)

- Send time, day of week, or weekends versus weekdays

- Call to action (CTA) placement (e.g., near the top of your email or lower down)

Tests to improve your click rates

To see what drives your recipients to click on links in your messages, try testing any of the following:

- CTA appearance (e.g., the font, color, or size of your button or text)

- CTA text (e.g., "Shop now" versus "Check it out!")

- Template organization (e.g., how you arrange the images and text in your message)

- Images versus GIFs

Tests to improve your open rates

To identify what prompts your subscribers to open your emails, try testing any of the following:

- Subject line tests:

  - Statements versus questions (e.g., “You’ve gotta see our latest drop” versus “Want to see our latest drop?”)

  - Subject line length

  - Subject line personalization (e.g., including the recipient’s first name in the subject line using the {{ first_name|default:'Friend' }} tag)

  - Emojis in subject lines

- Preview text (e.g., including versus not including preview text)

- From name (e.g., your company name compared to a more personal From name, like “Elise at Klaviyo”)

- Send time, day of week, or weekends versus weekdays
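The first-name tag in the subject line personalization item above falls back to a default value when a profile has no first name. The sketch below emulates that fallback in plain Python so you can preview both outcomes; the function names are hypothetical, and the real rendering is done by Klaviyo's template engine, not by this code.

```python
from typing import Optional


def render_first_name(first_name: Optional[str], fallback: str = "Friend") -> str:
    """Use the profile's first name, or the fallback when it is missing or empty."""
    return first_name if first_name else fallback


def render_subject(first_name: Optional[str]) -> str:
    """Build a personalized subject line for previewing."""
    return f"{render_first_name(first_name)}, want to see our latest drop?"


print(render_subject("Elise"))  # Elise, want to see our latest drop?
print(render_subject(None))     # Friend, want to see our latest drop?
```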

Review A/B test results and select a winner (optional)

Navigate to the campaign you are testing to view its progress and see which version has performed better. If the campaign is still sending and you'd like to end an ongoing A/B test:

- Navigate to the A/B Test Results tab of an ongoing campaign.

- Click the additional options menu next to the version you'd like to choose.

- Click Choose as Winner.

When you manually select a winner, anyone who hasn't yet received the campaign will receive the winning variation immediately. If your A/B test size was 100% of recipients, we will continue collecting data throughout the conversion window for the message, so the winner may change as new data comes in. For more information, check out How to review your email A/B test results for campaigns.

Additional resources

- How to A/B test a flow email

Learn how to use Klaviyo’s A/B testing experience to test individual emails in a flow, including elements such as subject lines, discounts, and email content. You can even use A/B tests to determine if plain-text emails perform better than image-heavy HTML emails.

- How to A/B test SMS campaigns

Learn how to A/B test SMS campaigns and what aspects to test in this article.

- Mastering Email A/B Testing: Klaviyo’s Proven Tips & Tricks to Boost Revenue